Apache Phoenix Reference Guide

Version 5.3.0

Apache Phoenix Team

Apache Phoenix Documentation

Complete Apache Phoenix documentation in one continuous page.

Overview

OLTP and operational analytics for Apache Hadoop

Download

Download latest Apache Phoenix binary and source release artifacts

Issues

Browse Apache Phoenix JIRAs

Source

Sync and build Apache Phoenix from source code

Apache Phoenix enables OLTP and operational analytics in Hadoop for low-latency applications by combining:

- the power of standard SQL and JDBC APIs, with full ACID transaction capabilities

- the flexibility of late-bound, schema-on-read capabilities from the NoSQL world by leveraging HBase as its backing store

Apache Phoenix is fully integrated with other Hadoop products such as Spark, Hive, Pig, Flume, and MapReduce.

Who is using Apache Phoenix? Read more here.

Mission

Become the trusted data platform for OLTP and operational analytics for Hadoop through well-defined, industry-standard APIs.

Quick Start

Tired of reading and just want to get started? Take a look at our FAQs, listen to the Apache Phoenix talk from Hadoop Summit 2015, review the overview presentation, and jump to our quick start guide here.

SQL Support

Apache Phoenix takes your SQL query, compiles it into a series of HBase scans, and orchestrates those scans to produce regular JDBC result sets. Direct use of the HBase API, along with coprocessors and custom filters, results in performance on the order of milliseconds for small queries, or seconds for tens of millions of rows.

To see a complete list of what is supported, go to our language reference. All standard SQL query constructs are supported, including SELECT, FROM, WHERE, GROUP BY, HAVING, ORDER BY, etc. It also supports a full set of DML commands, as well as table creation and versioned incremental alterations through DDL commands.

Here is a list of what is currently not supported:

- Relational operators:

INTERSECT,MINUS - Miscellaneous built-in functions: these are easy to add — read this blog for step-by-step instructions.

Connection

Use JDBC to get a connection to an HBase cluster:

Connection conn = DriverManager.getConnection("jdbc:phoenix:server1,server2:3333", props);Where props are optional properties that may include Phoenix and HBase configuration values.

The JDBC connection string is composed as:

jdbc:phoenix[:<zookeeper quorum>[:<port number>[:<root node>[:<principal>[:<keytab file>]]]]]For omitted parts, values are taken from hbase-site.xml (hbase.zookeeper.quorum, hbase.zookeeper.property.clientPort, and zookeeper.znode.parent).

The optional principal and keytab file may be used to connect to a Kerberos-secured cluster. If only principal is specified, each distinct user gets a dedicated HBase connection (HConnection), allowing multiple different connections with different configuration properties on the same JVM.

For example, for longer-running queries:

Connection conn = DriverManager.getConnection("jdbc:phoenix:my_server:longRunning", longRunningProps);And for shorter-running queries:

Connection conn = DriverManager.getConnection("jdbc:phoenix:my_server:shortRunning", shortRunningProps);See the relevant FAQ entry for example URLs.

Phoenix also supports connecting to HBase without ZooKeeper.

Transactions

To enable full ACID transactions (beta in 4.7.0), set phoenix.transactions.enabled=true. In this case, you also need to run the transaction manager included in the distribution. Once enabled, a table may optionally be declared as transactional (see transactions).

Commits over transactional tables are all-or-none: either all data is committed (including secondary index updates) or none is committed (and an exception is thrown). Both cross-table and cross-row transactions are supported. Transactional tables also see their own uncommitted data when querying. An optimistic concurrency model is used to detect row-level conflicts with first-commit-wins semantics.

Non-transactional tables have no guarantees beyond HBase row-level atomicity (see HBase ACID semantics). Also, non-transactional tables do not see updates until commit occurs.

Phoenix DML commands (UPSERT VALUES, UPSERT SELECT, DELETE) batch pending changes on the client side. Changes are sent to server on commit and discarded on rollback. If auto-commit is enabled, Phoenix will execute the entire DML command server-side via coprocessors whenever possible for better performance.

Timestamps

Most applications let HBase manage timestamps. In cases where timestamps must be controlled, the CurrentSCN property can be set at connection time to control timestamps for DDL, DML, and queries. This also enables snapshot queries against prior row values because Phoenix uses this property as scan max timestamp.

Timestamps cannot be controlled for transactional tables. Instead, the transaction manager assigns timestamps that become HBase cell timestamps on commit. They still correspond to wall-clock time, but are multiplied by 1,000,000 to ensure enough granularity for uniqueness across the cluster.

Schema

Apache Phoenix supports table creation and versioned incremental alterations through DDL commands. Table metadata is stored in an HBase table and versioned, so snapshot queries over prior versions automatically use the correct schema.

A Phoenix table can be created through CREATE TABLE and can either be:

- Built from scratch: HBase table and column families are created automatically.

- Mapped to an existing HBase table: either as a read-write TABLE or a read-only VIEW, with the caveat that row key/key-value binary representation must match Phoenix data types (see Data Types).

- For a read-write TABLE, column families are created automatically if absent. An empty key value is added to the first column family of each existing row to minimize projection size for queries.

- For a read-only VIEW, all column families must already exist. The only change is adding Phoenix coprocessors for query processing. The primary use case is transferring existing data into Phoenix; DML is not allowed on a VIEW, and query performance may be lower than with a TABLE.

All schema is versioned (up to 1000 versions kept). Snapshot queries over older data pick up the correct schema based on connection time via CurrentSCN.

Altering

A Phoenix table may be altered via ALTER TABLE. When a SQL statement references a table, Phoenix checks with the server by default to ensure metadata and statistics are up to date.

If table structure is known to be stable, this RPC may be unnecessary. UPDATE_CACHE_FREQUENCY (added in 4.7) lets users define how often the server is checked for metadata/statistics updates. Possible values: ALWAYS (default), NEVER, or a millisecond value.

For example, this DDL creates table FOO and tells clients to check for updates every 15 minutes:

CREATE TABLE FOO (k BIGINT PRIMARY KEY, v VARCHAR) UPDATE_CACHE_FREQUENCY=900000;Views

Phoenix supports updatable views on top of tables, with the unique capability of adding columns by leveraging HBase schemaless behavior. All views share the same underlying HBase table and may be indexed independently. Read more here.

Multi-tenancy

Built on top of view support, Phoenix also supports multi-tenancy. As with views, a multi-tenant view can add columns defined solely for that user.

Schema at read time

Another schema-related feature allows columns to be defined dynamically at query time. This is useful when not all columns are known at create time. More details here.

Mapping to an Existing HBase Table

Phoenix supports mapping to an existing HBase table through CREATE TABLE and CREATE VIEW. In both cases, HBase metadata remains as-is, except that with CREATE TABLE, KEEP_DELETED_CELLS is enabled so flashback queries work correctly.

For CREATE TABLE, missing HBase metadata (table/column families) is created if needed. Table and column family names are case-sensitive at HBase level; Phoenix uppercases names by default. To preserve case sensitivity, wrap names in double quotes:

CREATE VIEW "MyTable" ("a".ID VARCHAR PRIMARY KEY);For CREATE TABLE, an empty key value is added per row so queries behave as expected without projecting all columns during scans. For CREATE VIEW, this is not done and no HBase metadata is created. Existing HBase metadata must match DDL metadata, or ERROR 505 (42000): Table is read only is thrown.

Another caveat: bytes serialized in HBase must match Phoenix serialization expectations.

- For

VARCHAR,CHAR, andUNSIGNED_*types, Phoenix uses HBaseBytesutility methods. CHARexpects only single-byte characters.UNSIGNED_*expects non-negative values.

Composite row keys are formed by concatenating values, with a zero byte separator after variable-length types. For more on type system details, see Data Types.

Salting

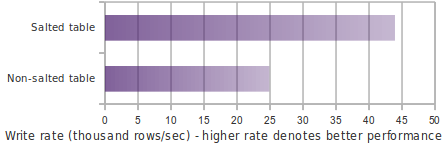

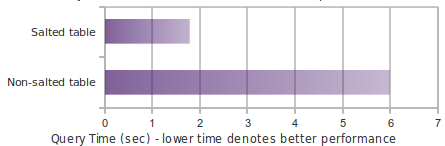

Tables can be declared salted to avoid HBase region hotspotting. Declare a salt bucket count and Phoenix manages salting transparently. See details here, and write-throughput comparison here.

APIs

Catalog metadata (tables, columns, primary keys, and types) can be retrieved through Java SQL metadata interfaces: DatabaseMetaData, ParameterMetaData, and ResultSetMetaData.

For schema/table/column retrieval via DatabaseMetaData, schema pattern, table pattern, and column pattern are LIKE-style expressions (% and _, escaped by \).

In metadata APIs, table catalog argument is used to filter by tenant ID for multi-tenant tables.

Quick Start

What is this new Phoenix thing I've been hearing about?

Phoenix is an open source SQL skin for HBase. You use the standard JDBC APIs instead of the regular HBase client APIs to create tables, insert data, and query your HBase data.

Doesn't putting an extra layer between my application and HBase just slow things down?

Actually, no. Phoenix achieves as good or likely better performance than if you hand-coded it yourself (not to mention with a heck of a lot less code) by:

- compiling your SQL queries to native HBase scans

- determining the optimal start and stop for your scan key

- orchestrating the parallel execution of your scans

- bringing the computation to the data by

- pushing the predicates in your where clause to a server-side filter

- executing aggregate queries through server-side hooks (called co-processors)

- secondary indexes to improve performance for queries on non row key columns

- stats gathering to improve parallelization and guide choices between optimizations

- skip scan filter to optimize IN, LIKE, and OR queries

- optional salting of row keys to evenly distribute write load

Ok, so it's fast. But why SQL? It's so 1970s

Well, that's kind of the point: give folks something with which they're already familiar. What better way to spur the adoption of HBase? On top of that, using JDBC and SQL:

- Reduces the amount of code users need to write

- Allows for performance optimizations transparent to the user

- Opens the door for leveraging and integrating lots of existing tooling

But how can SQL support my favorite HBase technique of x,y,z

Didn't make it to the last HBase Meetup did you? SQL is just a way of expressing what you want to get not how you want to get it. Check out my presentation for various existing and to-be-done Phoenix features to support your favorite HBase trick. Have ideas of your own? We'd love to hear about them: file an issue for us and/or join our mailing list.

Blah, blah, blah - I just want to get started!

Ok, great! Just follow our install instructions:

- download and expand our installation binary tar corresponding to your HBase version

- copy the phoenix server jar into the lib directory of every region server and master

- restart HBase

- add the phoenix client jar to the classpath of your JDBC client or application

- We have detailed instructions for setting up SQuirreL SQL as your SQL client

I don't want to download and setup anything else!

Ok, fair enough - you can create your own SQL scripts and execute them using our command line tools instead. Let's walk through an example now. Begin by navigating to the bin/ directory of your Phoenix install location.

First, let's create a us_population.sql file, containing a table definition:

CREATE TABLE IF NOT EXISTS us_population (

state CHAR(2) NOT NULL,

city VARCHAR NOT NULL,

population BIGINT

CONSTRAINT my_pk PRIMARY KEY (state, city)

);Now let's create a us_population.csv file containing some data to put in that table:

NY,New York,8143197

CA,Los Angeles,3844829

IL,Chicago,2842518

TX,Houston,2016582

PA,Philadelphia,1463281

AZ,Phoenix,1461575

TX,San Antonio,1256509

CA,San Diego,1255540

TX,Dallas,1213825

CA,San Jose,912332Execute the following command from a command terminal to create and populate the table

./psql.py <your_zookeeper_quorum> us_population.sql us_population.csvStart the interactive sql client

./sqlline.py <your_zookeeper_quorum>and issue a query

SELECT state as "State",count(city) as "City Count",sum(population) as "Population Sum"

FROM us_population

GROUP BY state

ORDER BY sum(population) DESC;Congratulations! You've just created your first Phoenix table, inserted data into it, and executed an aggregate query with just a few lines of code in 15 minutes or less!

Big deal - 10 rows! What else you got?

Ok, ok - tough crowd. Check out our bin/performance.py script to create as many rows as you want, for any schema you come up with, and run timed queries against it.

Why is it called Phoenix anyway? Did some other project crash and burn and this is the next generation?

I'm sorry, but we're out of time and space, so we'll have to answer that next time!

FAQ

Questions answered in this page:

- I want to get started. Is there a Phoenix Hello World?

- What is the Phoenix JDBC URL syntax?

- Is there a way to bulk load in Phoenix?

- How I map Phoenix table to an existing HBase table?

- Are there any tips for optimizing Phoenix?

- How do I create Secondary Index on a table?

- Why isn't my secondary index being used?

- How fast is Phoenix? Why is it so fast?

- How do I connect to secure HBase cluster?

- What HBase and Hadoop versions are supported?

- Can phoenix work on tables with arbitrary timestamp as flexible as HBase API?

- Why isn't my query doing a RANGE SCAN?

- Should I pool Phoenix JDBC Connections?

- Why does Phoenix add an empty or dummy KeyValue when doing an upsert?

I want to get started. Is there a Phoenix Hello World?

Pre-requisite: Download and install the latest Phoenix.

Using console

Start Sqlline:

$ sqlline.py [zookeeper quorum hosts]Execute the following statements when Sqlline connects:

create table test (mykey integer not null primary key, mycolumn varchar);

upsert into test values (1,'Hello');

upsert into test values (2,'World!');

select * from test;You should get the following output:

+-------+------------+

| MYKEY | MYCOLUMN |

+-------+------------+

| 1 | Hello |

| 2 | World! |

+-------+------------+Using Java

Create test.java file with the following content:

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.ResultSet;

import java.sql.SQLException;

import java.sql.PreparedStatement;

import java.sql.Statement;

public class test {

public static void main(String[] args) throws SQLException {

Statement stmt = null;

ResultSet rset = null;

Connection con = DriverManager.getConnection("jdbc:phoenix:[zookeeper quorum hosts]");

stmt = con.createStatement();

stmt.executeUpdate("create table test (mykey integer not null primary key, mycolumn varchar)");

stmt.executeUpdate("upsert into test values (1,'Hello')");

stmt.executeUpdate("upsert into test values (2,'World!')");

con.commit();

PreparedStatement statement = con.prepareStatement("select * from test");

rset = statement.executeQuery();

while (rset.next()) {

System.out.println(rset.getString("mycolumn"));

}

statement.close();

con.close();

}

}Compile and execute on command line

$ javac test.java

$ java -cp "../phoenix-[version]-client.jar:." testYou should get the following output

Hello

World!What is the Phoenix JDBC URL syntax?

Thick Driver

See Using the Phoenix JDBC Driver for a more up-to-date description

The Phoenix (Thick) Driver JDBC URL syntax is as follows (where elements in square brackets are optional):

jdbc:phoenix:[comma-separated ZooKeeper Quorum Hosts [: ZK port [:hbase root znode [:kerberos_principal [:path to kerberos keytab] ] ] ]The simplest URL is:

jdbc:phoenixWhereas the most complicated URL is:

jdbc:phoenix:zookeeper1.domain,zookeeper2.domain,zookeeper3.domain:2181:/hbase-1:phoenix@EXAMPLE.COM:/etc/security/keytabs/phoenix.keytabPlease note that each optional element in the URL requires all previous optional elements. For example, to specify the HBase root ZNode, the ZooKeeper port must also be specified.

See also Connection String.

Thin Driver

The Phoenix Thin Driver (used with the Phoenix Query Server) JDBC URL syntax is as follows:

jdbc:phoenix:thin:[key=value[;key=value...]]There are a number of keys exposed for client-use. The most commonly-used keys are: url and serialization. The url

key is required to interact with the Phoenix Query Server.

The simplest URL is:

jdbc:phoenix:thin:url=http://localhost:8765Where as very complicated URL is:

jdbc:phoenix:thin:url=http://queryserver.domain:8765;serialization=PROTOBUF;authentication=SPENGO;principal=phoenix@EXAMPLE.COM;keytab=/etc/security/keytabs/phoenix.keytabPlease refer to the Apache Avatica documentation for a full list of supported options in the Thin client JDBC URL, or see the Query Server documentation

Is there a way to bulk load in Phoenix?

Map Reduce

See the example here

CSV

CSV data can be bulk loaded with built in utility named psql. Typical upsert rates are 20K - 50K rows per second (depends on how wide are the rows).

Usage example:

-

Create table using psql:

$ psql.py [zookeeper] ../examples/web_stat.sql -

Upsert CSV bulk data:

$ psql.py [zookeeper] ../examples/web_stat.csv

How I map Phoenix table to an existing HBase table?

You can create both a Phoenix table or view through the CREATE TABLE/CREATE VIEW DDL statement on a pre-existing HBase table. In both cases, we'll leave the HBase metadata as-is. For CREATE TABLE, we'll create any metadata (table, column families) that doesn't already exist. We'll also add an empty key value for each row so that queries behave as expected (without requiring all columns to be projected during scans).

The other caveat is that the way the bytes were serialized must match the way the bytes are serialized by Phoenix. For VARCHAR, CHAR, and UNSIGNED_* types, we use the HBase Bytes methods. The CHAR type expects only single-byte characters and the UNSIGNED types expect values greater than or equal to zero. For signed types (TINYINT, SMALLINT, INTEGER and BIGINT), Phoenix will flip the first bit so that negative values will sort before positive values. Because HBase sorts row keys in lexicographical order and negative value's first bit is 1 while positive 0 so that negative value is 'greater than' positive value if we don't flip the first bit. So if you stored integers by HBase native API and want to access them by Phoenix, make sure that all your data types are UNSIGNED types.

Our composite row keys are formed by simply concatenating the values together, with a zero byte character used as a separator after a variable length type.

If you create an HBase table like this:

create 't1', {NAME => 'f1', VERSIONS => 5}then you have an HBase table with a name of t1 and a column family with a name of f1. Remember, in HBase, you don't model the possible KeyValues or the structure of the row key. This is the information you specify in Phoenix above and beyond the table and column family.

So in Phoenix, you'd create a view like this:

CREATE VIEW "t1" ( pk VARCHAR PRIMARY KEY, "f1".val VARCHAR )The pk column declares that your row key is a VARCHAR (i.e. a string) while the "f1".val column declares that your HBase table will contain KeyValues with a column family and column qualifier of "f1":VAL and that their value will be a VARCHAR.

Note that you don't need the double quotes if you create your HBase table with all caps names (since this is how Phoenix normalizes strings, by upper casing them). For example, with:

create 'T1', {NAME => 'F1', VERSIONS => 5}you could create this Phoenix view:

CREATE VIEW t1 ( pk VARCHAR PRIMARY KEY, f1.val VARCHAR )Or if you're creating new HBase tables, just let Phoenix do everything for you like this (No need to use the HBase shell at all.):

CREATE TABLE t1 ( pk VARCHAR PRIMARY KEY, val VARCHAR )Are there any tips for optimizing Phoenix?

-

Use Salting to increase read/write performance

Salting can significantly increase read/write performance by pre-splitting the data into multiple regions. Although Salting will yield better performance in most scenarios.

Example:CREATE TABLE TEST (HOST VARCHAR NOT NULL PRIMARY KEY, DESCRIPTION VARCHAR) SALT_BUCKETS=16Note: Ideally for a 16 region server cluster with quad-core CPUs, choose salt buckets between 32-64 for optimal performance.

-

Pre-split table

Salting does automatic table splitting but in case you want to exactly control where table split occurs with out adding extra byte or change row key order then you can pre-split a table.

Example:CREATE TABLE TEST (HOST VARCHAR NOT NULL PRIMARY KEY, DESCRIPTION VARCHAR) SPLIT ON ('CS','EU','NA') -

Use multiple column families

Column family contains related data in separate files. If you query use selected columns then it make sense to group those columns together in a column family to improve read performance.

Example:

Following create table DDL will create two column faimiles A and B.CREATE TABLE TEST (MYKEY VARCHAR NOT NULL PRIMARY KEY, A.COL1 VARCHAR, A.COL2 VARCHAR, B.COL3 VARCHAR) -

Use compression

On disk compression improves performance on large tables

Example:CREATE TABLE TEST (HOST VARCHAR NOT NULL PRIMARY KEY, DESCRIPTION VARCHAR) COMPRESSION='GZ' -

Create indexes See How do I connect to secure HBase cluster?

-

Optimize cluster parameters See https://hbase.apache.org/docs/performance

-

Optimize Phoenix parameters See Configuration

How do I create Secondary Index on a table?

Starting with Phoenix version 2.1, Phoenix supports index over mutable and immutable data. Note that Phoenix 2.0.x only supports Index over immutable data. Index write performance index with immutable table is slightly faster than mutable table however data in immutable table cannot be updated.

Example:

- Create table

Immutable table:create table test (mykey varchar primary key, col1 varchar, col2 varchar) IMMUTABLE_ROWS=true;

Mutable table:create table test (mykey varchar primary key, col1 varchar, col2 varchar); - Creating index on col2

create index idx on test (col2) - Creating index on col1 and a covered index on col2

create index idx on test (col1) include (col2)

Upsert rows in this test table and Phoenix query optimizer will choose correct index to use. You can see in explain plan if Phoenix is using the index table. You can also give a hint in Phoenix query to use a specific index.

See Secondary Indexing for further information

Why isn't my secondary index being used?

The secondary index won't be used unless all columns used in the query are in it ( as indexed or covered columns). All columns making up the primary key of the data table will automatically be included in the index.

Example: DDL create table usertable (id varchar primary key, firstname varchar, lastname varchar); create index idx_name on usertable (firstname);

Query: DDL select id, firstname, lastname from usertable where firstname = 'foo';

Index would not be used in this case as lastname is not part of indexed or covered column. This can be verified by looking at the explain plan. To fix this create index that has either lastname part of index or covered column. Example: create idx_name on usertable (firstname) include (lastname);

You can force Phoenix to use secondary for uncovered columns by specifying an index hint

How fast is Phoenix? Why is it so fast?

Phoenix is fast. Full table scan of 100M rows usually completes in 20 seconds (narrow table on a medium sized cluster). This time come down to few milliseconds if query contains filter on key columns. For filters on non-key columns or non-leading key columns, you can add index on these columns which leads to performance equivalent to filtering on key column by making copy of table with indexed column(s) part of key.

Why is Phoenix fast even when doing full scan:

- Phoenix chunks up your query using the region boundaries and runs them in parallel on the client using a configurable number of threads.

- The aggregation will be done in a coprocessor on the server-side, collapsing the amount of data that gets returned back to the client rather than returning it all.

How do I connect to secure HBase cluster?

Specify the principal and corresponding keytab in the JDBC URL as show above. For ancient Phoenix versions heck out the excellent post by Anil Gupta

What HBase and Hadoop versions are supported?

Phoenix 4.x supports HBase 1.x running on Hadoop 2

Phoenix 5.x supports HBase 2.x running on Hadoop 3

See the release notes and BUILDING in recent releases for the exact versions supported, and on how to build Phoenix for specific HBase and Hadoop versions

Can phoenix work on tables with arbitrary timestamp as flexible as HBase API?

By default, Phoenix lets HBase manage the timestamps and just shows you the latest values for everything. However, Phoenix also allows arbitrary timestamps to be supplied by the user. To do that you'd specify a CurrentSCN at connection time, like this:

Properties props = new Properties();

props.setProperty("CurrentSCN", Long.toString(ts));

Connection conn = DriverManager.connect(myUrl, props);

conn.createStatement().execute("UPSERT INTO myTable VALUES ('a')");

conn.commit();The above is equivalent to doing this with the HBase API:

myTable.put(Bytes.toBytes('a'), ts);By specifying a CurrentSCN, you're telling Phoenix that you want everything for that connection to be done at that timestamp. Note that this applies to queries done on the connection as well - for example, a query over myTable above would not see the data it just upserted, since it only sees data that was created before its CurrentSCN property. This provides a way of doing snapshot, flashback, or point-in-time queries.

Keep in mind that creating a new connection is not an expensive operation. The same underlying HConnection is used for all connections to the same cluster, so it's more or less like instantiating a few objects.

Why isn't my query doing a RANGE SCAN?

CREATE TABLE TEST (

pk1 char(1) not null,

pk2 char(1) not null,

pk3 char(1) not null,

non-pk varchar,

CONSTRAINT PK PRIMARY KEY(pk1, pk2, pk3)

);RANGE SCAN means that only a subset of the rows in your table will be scanned over. This occurs if you use one or more leading columns from your primary key constraint. Query that is not filtering on leading PK columns ex. select * from test where pk2='x' and pk3='y'; will result in full scan whereas the following query will result in range scan select * from test where pk1='x' and pk2='y';. Note that you can add a secondary index on your pk2 and pk3 columns and that would cause a range scan to be done for the first query (over the index table).

DEGENERATE SCAN means that a query can't possibly return any rows. If we can determine that at compile time, then we don't bother to even run the scan.

FULL SCAN means that all rows of the table will be scanned over (potentially with a filter applied if you have a WHERE clause)

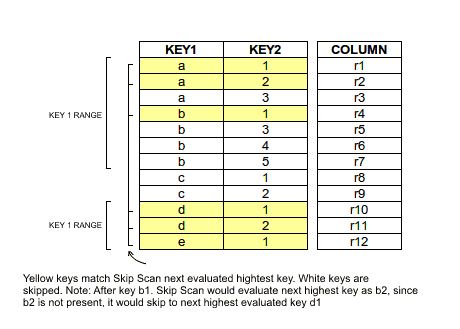

SKIP SCAN means that either a subset or all rows in your table will be scanned over, however it will skip large groups of rows depending on the conditions in your filter. See this blog for more detail. We don't do a SKIP SCAN if you have no filter on the leading primary key columns, but you can force a SKIP SCAN by using the /*+ SKIP_SCAN */ hint. Under some conditions, namely when the cardinality of your leading primary key columns is low, it will be more efficient than a FULL SCAN.

Should I pool Phoenix JDBC Connections?

No, it is not necessary to pool Phoenix JDBC Connections.

Phoenix's Connection objects are different from most other JDBC Connections due to the underlying HBase connection. The Phoenix Connection object is designed to be a thin object that is inexpensive to create. If Phoenix Connections are reused, it is possible that the underlying HBase connection is not always left in a healthy state by the previous user. It is better to create new Phoenix Connections to ensure that you avoid any potential issues.

Implementing pooling for Phoenix could be done simply by creating a delegate Connection that instantiates a new Phoenix connection when retrieved from the pool and then closes the connection when returning it to the pool (see PHOENIX-2388).

Why does Phoenix add an empty/dummy KeyValue when doing an upsert?

The empty or dummy KeyValue (with a column qualifier of _0) is needed to ensure that a given column is available

for all rows.

As you may know, data is stored in HBase as KeyValues, meaning that

the full row key is stored for each column value. This also implies

that the row key is not stored at all unless there is at least one

column stored.

Now consider JDBC row which has an integer primary key, and several

columns which are all null. In order to be able to store the primary

key, a KeyValue needs to be stored to show that the row is present at

all. This column is represented by the empty column that you've

noticed. This allows doing a SELECT * FROM TABLE and receiving

records for all rows, even those whose non-pk columns are null.

The same issue comes up even if only one column is null for some (or all) records. A scan over Phoenix will include the empty column to ensure that rows that only consist of the primary key (and have null for all non-key columns) will be included in a scan result.

Building

Building the Main Phoenix Project

Phoenix consists of several subprojects.

The core project is phoenix, which depends on phoenix-thirdparty, phoenix-omid, and phoenix-tephra.

phoenix-queryserver and phoenix-connectors are optional packages that also depend on phoenix.

Check out the source and follow the build instructions in BUILDING.md (or README.md) in the repository root.

Using Phoenix in a Maven Project

Phoenix artifacts are published to Apache and Maven Central repositories. Add the dependency below to your pom.xml:

<dependencies>

<dependency>

<groupId>org.apache.phoenix</groupId>

<artifactId>phoenix-client-hbase-[hbase.profile]</artifactId>

<version>[phoenix.version]</version>

</dependency>

</dependencies>Where:

[phoenix.version]is the Phoenix release version (for example,5.1.2or4.16.1)[hbase.profile]is the compatible HBase profile

See Downloads for supported release/profile combinations.

Branches

The main Phoenix project currently has two active branches.

4.xworks with HBase 1 and Hadoop 25.xworks with HBase 2 and Hadoop 3

See Downloads and BUILDING.md for exact version compatibility by release.

See also:

Client Classpath and JDBC URL

Using the Phoenix JDBC Driver

This page is about using the Phoenix thick client.

The thin client for Phoenix Query Server is described on its own page.

The Phoenix classpath

To use Phoenix, both the JDBC driver JAR and hbase-site.xml must be added to the application classpath.

Phoenix driver JAR

The Phoenix JDBC client is built on top of the HBase client, and has an unusually high number of dependencies. To make this manageable, Phoenix provides a single shaded uberjar that can be added to the classpath.

Phoenix uses some private and semi-public HBase APIs, which may change between HBase versions, and provides separate binary distributions for different HBase versions.

Choose the binary distribution or Maven artifact corresponding to the HBase version on your cluster.

Copy the driver JAR from the binary distribution.

Copy the corresponding phoenix-client-embedded-hbase-[hbase.profile]-[phoenix.version].jar to the application classpath.

Add the dependency via Maven.

<dependencies>

<dependency>

<groupId>org.apache.phoenix</groupId>

<artifactId>phoenix-client-embedded-hbase-[hbase.profile]</artifactId>

<version>[phoenix.version]</version>

</dependency>

</dependencies>hbase-site.xml from your target cluster to the classpath.HBase / Hadoop configuration files

As Phoenix is built on top of the HBase client, it needs the HBase configuration files for correct operation. For some configurations, it may also need other Hadoop / HDFS config files like core-site.xml.

Download the correct hbase-site.xml (the client one, usually in /etc/hbase/conf) from the cluster, and copy it to a directory on the classpath.

It is important to add the directory containing hbase-site.xml, and not the full file path, to the classpath.

Alternatively, package hbase-site.xml into the root directory of a JAR file and add that JAR to the classpath.

If hbase-site.xml changes on the cluster, make sure to copy the updated file to your application classpath.

For some development clusters that use default configuration Phoenix may work without this, but not having the correct hbase-site.xml on the

classpath is almost guaranteed to cause problems.

The Phoenix JDBC URL

The Phoenix URL contains two main parts. The first describes the connection to HBase; the second specifies extra Phoenix options.

jdbc:<protocol variant>[:<server list>[:<port>[:<zk root node>[:<principal>[:<keytab file>]]]]][;<option>=<value>]*protocol variant: The HBase connection registry to use (details below).server list: A comma-separated list of hostnames or IPv4 addresses. It is also possible to specify per-host ports, as defined in HBASE-12706. In this case:characters must be escaped with\. You may need to escape again in Java source strings.port: An integer port number. Ports specified inserver listtake precedence.zk root node: The root znode for HBase. Must be empty for non-ZK registries.principal: The Kerberos principal used for authentication.

If onlyprincipalis specified, this defines a distinct user identity with its own dedicated HBase connection (HConnection) and allows multiple differently configured connections in the same JVM.keytab: Kerberos keytab used for authentication. Must be specified together withprincipal.option: A connection option.value: A connection option value.

Parameters from end of the connection definition can be omitted.

Use empty strings for missing parameters in the middle of the URL.

For example, the jdbc:phoenix::::principal:/home/user/keytab URL can be used to specify the kerberos principal and keytab, while using the default connection specified in hbase-site.xml.

Default connection

The underlying HBase client identifies the cluster based on parameters in hbase-site.xml.

While Phoenix allows overriding this, it is usually best to use the cluster definition from hbase-site.xml.

The only time the connection should be directly specified is when switching between otherwise identically configured HBase instances, like a production and a disaster recovery cluster.

To use the defaults from hbase-site.xml, use the jdbc:phoenix URL or jdbc:phoenix;option=value if additional options are needed.

See HBase documentation for how each registry is configured in hbase-site.xml.

The jdbc:phoenix: protocol variant

If this protocol variant is specified, Phoenix will select the registry based on the value of hbase.client.registry.impl.

If hbase.client.registry.impl is not defined, Phoenix chooses a default based on the HBase client version it includes.

The jdbc:phoenix+zk: protocol variant

This uses the original ZooKeeper-based HBase connection registry. The server list and port specify the ZK quorum. HBASE-12706 is supported; : characters must be escaped with \.

Examples:

jdbc:phoenix+zk:localhost:2181:/hbase:principal:keytab- fully specifiedjdbc:phoenix+zk:host1\:2181,host1\:2182,host2\:2183- heterogeneous ports, default ZK root nodejdbc:phoenix+zk- use default ZK parameters fromhbase-site.xml(usingjdbc:phoenixis preferred in most cases)

The jdbc:phoenix+master: protocol variant

This uses the Master based connection registry added in HBASE-18095, and is available from HBase 2.3.0. The zk root node parameter must never be specified.

Examples:

jdbc:phoenix+master:master1\:16001,master2\:16002::principal:/path/to/keytab- fully specifiedjdbc:phoenix+master:master1,master2- use default master port for both hosts

The jdbc:phoenix+rpc: protocol variant

This uses the Master based connection registry added in HBASE-26150, and is available from HBase 2.5.0.

This is very similar to the phoenix+master variant, but also allows specifying RegionServers in the host list.

There is no built-in default port for this registry, the port must always be specified together with the host list.

Examples:

jdbc:phoenix+rpc:server1\:16001,server2\:16002::principal:/path/to/keytab- fully specifiedjdbc:phoenix+rpc- use values fromhbase-site.xml

Notes

Support for master and rpc registries is only available in Phoenix 5.1.4+

and 5.2.0+.

Earlier versions support only the jdbc:phoenix: protocol variant implementing the original HBase ZooKeeper connection registry.

Support for registry variants is only available for HBase versions that support them. Phoenix will throw an error if a variant that the HBase client version doesn't support is specified.

Phoenix 5.2 also supports High Availability connections. Documentation for that is only available in the JIRA ticket.

Tuning Guide

Tuning Phoenix can be complex, but with a little knowledge of how it works you can make significant improvements to read and write performance. The most important factor is schema design, especially how it affects underlying HBase row keys. See "General Tips" below for design guidance based on anticipated data access patterns. Subsequent sections describe how to use secondary indexes, hints, and explain plans.

Note: Phoenix and HBase work well when your application does point lookups and small range scans. This can be achieved by good primary key design (see below). If you find that your application requires many full table scans, then Phoenix and HBase are likely not the best tool for the job. Instead, look at using other tools that write to HDFS directly using columnar representations such as Parquet.

Primary Keys

The underlying row key design is the single most important factor in Phoenix performance, and it's important to get it right at design time because you cannot change it later without re-writing the data and index tables.

The Phoenix primary keys are concatenated to create the underlying row key in Apache HBase. Choose and order primary key columns to align with common query patterns. The leading key column has the greatest performance impact. For example, if you lead with a column containing org IDs, it is easy to select all rows for a specific org. You can also add the HBase row timestamp to the primary key to improve scan efficiency by skipping rows outside the queried time range.

Every primary key imposes a cost because the entire row key is appended to every piece of data in memory and on disk. The larger the row key, the greater the storage overhead. Find ways to store information compactly in columns you plan to use for primary keys — store deltas instead of complete time stamps, for example.

To sum up, the best practice is to design primary keys to add up to a row key that lets you scan the smallest amount of data.

Tip: When choosing primary keys, lead with the column you filter most frequently across the queries that are most important to optimize. If you use ORDER BY, make sure your PK columns match expressions in the ORDER BY clause.

Monotonically increasing Primary keys

If your primary keys are monotonically increasing, use salting to help distribute writes across the cluster and improve parallelization. Example:

CREATE TABLE … ( … ) SALT_BUCKETS = N

For optimal performance the number of salt buckets should approximately equal the number of region servers. Do not salt automatically. Use salting only when experiencing hotspotting. The downside of salting is that it imposes a cost on read because when you want to query the data you have to run multiple queries to do a range scan.

General Tips

The following sections provide a few general tips for different access scenarios.

Is the Data Random-Access?

- As with any random read workloads, SSDs can improve performance because of their faster random seek time.

Is the data read-heavy or write-heavy?

- For read-heavy data:

- Create global indexes. This will affect write speed depending on the number of columns included in an index because each index writes to its own separate table.

- Use multiple indexes to provide fast access to common queries.

- When specifying machines for HBase, do not skimp on cores; HBase needs them.

- For write-heavy data:

- Pre-split the table. It can be helpful to split the table into predefined regions, or if keys are monotonically increasing, use salting to avoid creating write hotspots on a small number of nodes. Use real data types rather than raw byte data.

- Create local indexes. Reads from local indexes have a performance penalty, so it's important to do performance testing. See the Pherf tool.

Which columns will be accessed often?

- Choose commonly-queried columns as primary keys. For more information, see “Primary Keys” below.

- Create additional indexes to support common query patterns, including heavily accessed fields that are not in the primary key.

Can the data be append-only (immutable)?

- If the data is immutable or append-only, declare the table and its indexes as immutable using the

IMMUTABLE_ROWSoption at creation time to reduce the write-time cost. If you need to make an existing table immutable, you can do so withALTER TABLE trans.event SET IMMUTABLE_ROWS=trueafter creation time.- If speed is more important than data integrity, you can use the

DISABLE_WALoption. Note: it is possible to lose data withDISABLE_WALif a region server fails.

- If speed is more important than data integrity, you can use the

- Set the

UPDATE_CACHE_FREQUENCYoption to 15 minutes or so if your metadata doesn't change very often. This property determines how often an RPC is done to ensure you're seeing the latest schema. - If the data is not sparse (over 50% of the cells have values), use the SINGLE_CELL_ARRAY_WITH_OFFSETS data encoding scheme introduced in Phoenix 4.10, which obtains faster performance by reducing the size of the data. For more information, see “Column Mapping and Immutable Data Encoding” on the Apache Phoenix blog.

Is the table very large?

- Use the

ASYNCkeyword with yourCREATE INDEXcall to create the index asynchronously via MapReduce job. You'll need to manually start the job; see Index Population for details. - If the data is too large to scan the table completely, use primary keys to create an underlying composite row key that makes it easy to return a subset of the data or facilitates skip-scanning — Phoenix can jump directly to matching keys when the query includes key sets in the predicate.

Is transactionality required?

A transaction is a data operation that is atomic — that is, guaranteed to succeed completely or not at all. For example, if you need to make cross-row updates to a data table, then you should consider your data transactional.

- If you need transactionality, use the

TRANSACTIONALoption. (See also Transactions)

Block Encoding

Using compression or encoding is a must. Both SNAPPY and FAST_DIFF are good all around options.

FAST_DIFF encoding is automatically enabled on all Phoenix tables by default, and almost always improves overall read latencies and throughput by allowing more data to fit into blockcache. Note: FAST_DIFF encoding can increase garbage produced during request processing.

Set encoding at table creation time. Example:

CREATE TABLE … ( … ) DATA_BLOCK_ENCODING=‘FAST_DIFF’

Schema Design

Because the schema affects the way the data is written to the underlying HBase layer, Phoenix performance relies on the design of your tables, indexes, and primary keys.

Phoenix and the HBase data model

HBase stores data in tables, which in turn contain columns grouped in column families. A row in an HBase table consists of versioned cells associated with one or more columns. An HBase row is a collection of many key-value pairs in which the rowkey attribute of the keys are equal. Data in an HBase table is sorted by the rowkey, and all access is via the rowkey. Phoenix creates a relational data model on top of HBase, enforcing a PRIMARY KEY constraint whose columns are concatenated to form the row key for the underlying HBase table. For this reason, it's important to be cognizant of the size and number of the columns you include in the PK constraint, because a copy of the row key is included with every cell in the underlying HBase table.

Column Families

If some columns are accessed more frequently than others, create multiple column families to separate the frequently-accessed columns from rarely-accessed columns. This improves performance because HBase reads only the column families specified in the query.

Columns

Here are a few tips that apply to columns in general, whether they are indexed or not:

- Keep

VARCHARcolumns under 1MB or so due to I/O costs. When processing queries, HBase materializes cells in full before sending them over to the client, and the client receives them in full before handing them off to the application code. - For structured objects, don't use JSON, which is not very compact. Use a format such as protobuf, Avro, msgpack, or BSON.

- Consider compressing data before storage using a fast LZ variant to cut latency and I/O costs.

- Use the column mapping feature (added in Phoenix 4.10), which uses numerical HBase column qualifiers for non-PK columns instead of directly using column names. This improves performance when looking for a cell in the sorted list of cells returned by HBase, adds further across-the-board performance by reducing the disk size used by tables, and speeds up DDL operations like column rename and metadata-level column drops. For more information, see “Column Mapping and Immutable Data Encoding” on the Apache Phoenix blog.

Indexes

A Phoenix index is a physical table that stores a pivoted copy of some or all of the data in the main table to serve specific query patterns. When you issue a query, Phoenix automatically selects the best index. The primary index is created automatically based on selected primary keys. You can create secondary indexes by specifying included columns based on expected query patterns.

See also: Secondary Indexing

Secondary indexes

Secondary indexes can improve read performance by turning what would normally be a full table scan into a point lookup (at the cost of storage space and write speed). Secondary indexes can be added or removed after table creation and don't require changes to existing queries – queries simply run faster. A small number of secondary indexes is often sufficient. Depending on your needs, consider creating covered indexes or functional indexes, or both.

If your table is large, use ASYNC with CREATE INDEX to create indexes asynchronously. In this case, index build runs through MapReduce, so client restarts will not impact index creation and jobs can be retried automatically if needed. You still need to start the job manually, and then monitor it like any other MapReduce job.

Example:

CREATE INDEX IF NOT EXISTS event_object_id_idx_b

ON trans.event (object_id)

ASYNC UPDATE_CACHE_FREQUENCY = 60000;See Index Population for details.

If you cannot create the index asynchronously, increase query timeout (phoenix.query.timeoutMs) to exceed expected index build time. If CREATE INDEX times out or the client goes down before completion, the build stops and must be run again. You can monitor the index table during creation: new regions appear as splits occur. You can query SYSTEM.STATS (populated by splits/compactions), or run COUNT(*) against the index table (higher load because it requires a full scan).

Tips:

-

Create local indexes for write-heavy use cases.

-

Create global indexes for read-heavy use cases. To save read-time overhead, consider creating covered indexes.

-

If the primary key is monotonically increasing, create salt buckets. The salt buckets can't be changed later, so design them to handle future growth. Salt buckets help avoid write hotspots, but can decrease overall throughput due to the additional scans needed on read.

-

Set up a cron job to build indexes. Use

ASYNCwithCREATE INDEXto avoid blocking. -

Only create the indexes you need.

-

Limit the number of indexes on frequently updated tables.

-

Use covered indexes to convert table scans into efficient point lookups or range queries over the index table instead of the primary table:

CREATE INDEX idx ON table_name ( ... ) INCLUDE ( ... );

Queries

It's important to know which queries execute on the server side versus client side, because this affects performance due to network I/O and other bottlenecks. If you're querying a billion-row table, you want as much computation as possible on the server side instead of transmitting rows to the client. Some queries must still execute on the client. Sorting data that resides on multiple region servers, for example, requires aggregation and re-sort on the client.

Reading

- Avoid joins unless one side is small, especially on frequent queries. For larger joins, see “Hints,” below.

- In the

WHEREclause, filter leading columns in the primary key constraint. - Filtering the first leading column with

INorORin theWHEREclause enables skip scan optimizations. - Equality or comparisons (

<or>) in theWHEREclause enable range-scan optimizations. - Let Phoenix optimize query parallelism using statistics. This provides an automatic benefit if using Phoenix 4.2 or greater in production.

See also: Joins

Range Queries

If you regularly scan large data sets from spinning disk, you're best off with GZIP (but watch write speed). Use a lot of cores for a scan to utilize the available memory bandwidth. Apache Phoenix makes it easy to utilize many cores to increase scan performance.

For range queries, the HBase block cache does not provide much advantage.

Large Range Queries

For large range queries, consider setting Scan.setCacheBlocks(false) even if the whole scan could fit into the block cache.

If you mostly perform large range queries you might even want to consider running HBase with a much smaller heap and size the block cache down, to only rely on the OS Cache. This will alleviate some garbage collection related issues.

Point Lookups

For point lookups it is quite important to have your data set cached, and you should use the HBase block cache.

Hints

Hints let you override default query processing behavior and specify such factors as which index to use, what type of scan to perform, and what type of join to use.

- During the query, Hint global index if you want to force it when query includes a column not in the index.

- If necessary, you can do bigger joins with the

/*+ USE_SORT_MERGE_JOIN */hint, but a big join will be an expensive operation over huge numbers of rows. - If the overall size of all right-hand-side tables would exceed the memory size limit, use the

/*+ NO_STAR_JOIN */hint.

See also: Hint.

Explain plans

An EXPLAIN plan tells you a lot about how a query will be run. To generate an explain plan run this query and to interpret the plan, see this reference.

Parallelization

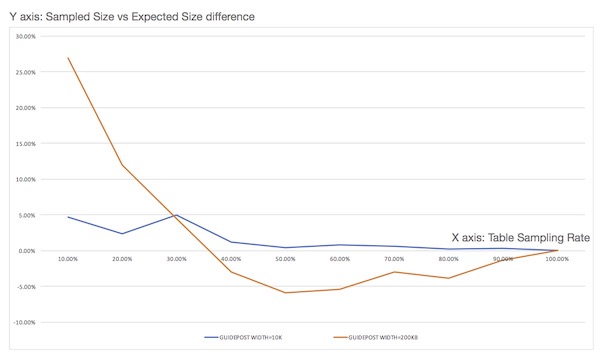

You can improve parallelization with the UPDATE STATISTICS command. This command subdivides each region by determining keys called guideposts that are equidistant from each other, then uses these guideposts to break up queries into multiple parallel scans. Statistics are turned on by default. With Phoenix 4.9, the user can set guidepost width for each table. Optimal guidepost width depends on a number of factors such as cluster size, cluster usage, number of cores per node, table size, and disk I/O.

In Phoenix 4.12, configuration phoenix.use.stats.parallelization was added to control whether statistics are used to drive parallelization. Stats collection can still run regardless. Collected information is also used to estimate bytes and rows scanned when generating EXPLAIN.

Writing

Updating data with UPSERT VALUES

When using UPSERT VALUES to write a large number of records, turn off autocommit and batch records in reasonably small batches (try 100 rows and adjust from there to fine-tune performance).

Note: With the default fat driver, executeBatch() does not provide benefit. Instead, update multiple rows by executing UPSERT VALUES multiple times and then use commit() to submit the batch. With the thin driver, however, use executeBatch() to minimize RPCs between the client and query server.

try (Connection conn = DriverManager.getConnection(url)) {

conn.setAutoCommit(false);

int batchSize = 0;

int commitSize = 1000; // number of rows you want to commit per batch.

try (PreparedStatement stmt = conn.prepareStatement(upsert)) {

// set params...

while (/* there are records to upsert */) {

stmt.executeUpdate();

batchSize++;

if (batchSize % commitSize == 0) {

conn.commit();

}

}

conn.commit(); // commit the last batch of records

}Note: Because the Phoenix client keeps uncommitted rows in memory, be careful not to set commitSize too high.

Updating data with UPSERT SELECT

When using UPSERT SELECT to write many rows in a single statement, turn on autocommit and the rows will be automatically batched according to the phoenix.mutate.batchSize. This will minimize the amount of data returned back to the client and is the most efficient means of updating many rows.

Deleting data

When deleting a large data set, turn on autoCommit before issuing the DELETE query so that the client does not need to remember the row keys of all the keys as they are deleted. This prevents the client from buffering the rows affected by the DELETE so that Phoenix can delete them directly on the region servers without the expense of returning them to the client.

Reducing RPC traffic

To reduce RPC traffic, set UPDATE_CACHE_FREQUENCY (4.7 or above) on your tables and indexes when creating them (or via ALTER TABLE/ALTER INDEX). See Altering.

Using local indexes

If using 4.8, consider using local indexes to minimize the write time. In this case, the writes for the secondary index will be to the same region server as your base table. This approach does involve a performance hit on the read side, though, so make sure to quantify both write speed improvement and read speed reduction.

Further tuning

For advice about tuning the underlying HBase and JVM layers, see Operational and Performance Configuration Options in the Apache HBase™ Reference Guide.

Special Cases

The following sections provide Phoenix-specific additions to the tuning recommendations in the Apache HBase™ Reference Guide section referenced above.

For applications where failing quickly is better than waiting

In addition to the HBase tuning referenced above, set phoenix.query.timeoutMs in hbase-site.xml on the client side to the maximum tolerable wait time in milliseconds.

For applications that can tolerate slightly out of date information

In addition to the HBase tuning referenced above, set phoenix.connection.consistency = timeline in hbase-site.xml on the client side for all connections.

Installation

Installation

To install a pre-built Phoenix, use these directions:

- Download and expand the latest

phoenix-hbase-[hbase.version]-[phoenix.version]-bin.tar.gzfor your HBase version. - Add

phoenix-server-hbase-[hbase.version]-[phoenix.version].jarto the classpath of all HBase region servers and masters, and remove any previous version. An easy way is to copy it into the HBaselibdirectory. - Restart HBase.

- Add

phoenix-client-hbase-[hbase.version]-[phoenix.version].jarto the classpath of any JDBC client.

To install Phoenix from source:

- Download and expand the latest

phoenix-[phoenix.version]-src.tar.gzfor your HBase version, or check it out from the main source repository. - Follow the build instructions in

BUILDING.mdin the root directory of the source distribution/repository to build the binary assembly. - Follow the instructions above, but use the assembly built from source.

Getting Started

Want to get started quickly? Take a look at our FAQs and quick start guide here.

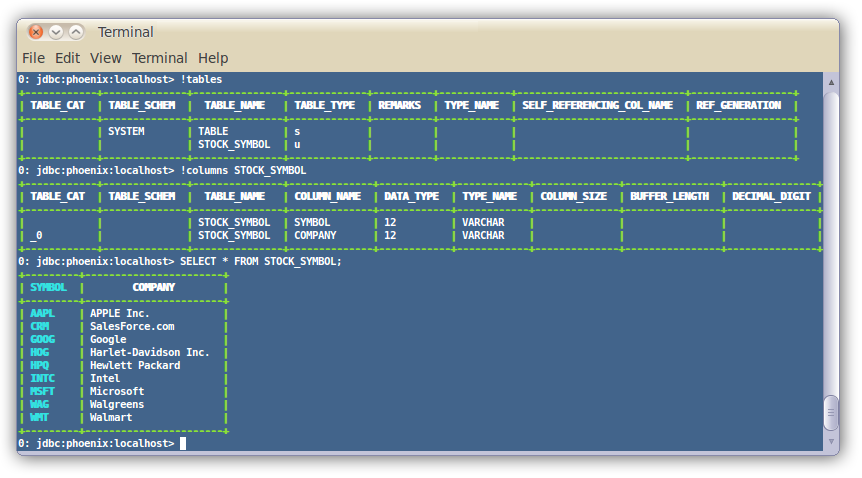

Command Line

A terminal interface to execute SQL from the command line is now bundled with Phoenix. To start it, execute the following from the bin directory:

$ sqlline.py [zk quorum hosts]To execute SQL scripts from the command line, you can include a SQL file argument like this:

$ sqlline.py [zk quorum hosts] ../examples/stock_symbol.sql

For more information, see the manual.

Loading Data

In addition, you can use bin/psql.py to load CSV data or execute SQL scripts. For example:

$ psql.py localhost ../examples/web_stat.sql ../examples/web_stat.csv ../examples/web_stat_queries.sqlOther alternatives include:

- Using our map-reduce based CSV loader for bigger data sets

- Mapping an existing HBase table to a Phoenix table and using the UPSERT SELECT command to populate a new table.

- Populating the table through our UPSERT VALUES command.

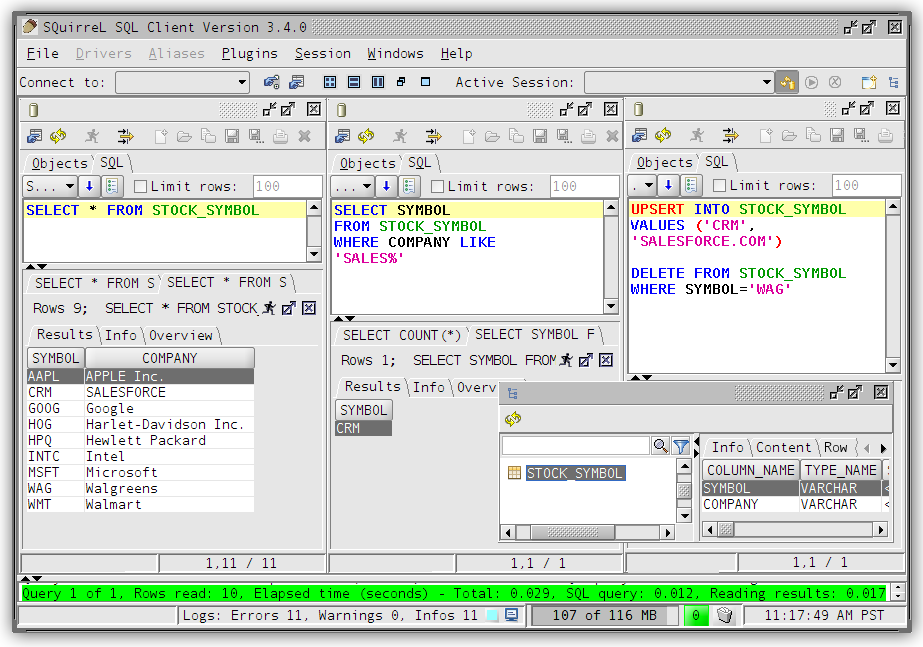

SQuirreL SQL Client

If you'd rather use a client GUI to interact with Phoenix, download and install SQuirrel. Since Phoenix is a JDBC driver, integration with tools such as this are seamless. Here are the setup steps necessary:

Remove prior phoenix-[_oldversion_]-client.jar from the SQuirreL lib

directory, then copy phoenix-[_newversion_]-client.jar there (_newversion_

should match the Phoenix server jar used with your HBase installation).

Drivers -> New Driver).In Add Driver, set Name to Phoenix, and set the Example URL to

jdbc:phoenix:localhost.

Enter org.apache.phoenix.jdbc.PhoenixDriver into the Class Name field and

click OK.

Switch to Alias tab and create a new Alias (Aliases -> New Alias).

In the dialog, use Name: any name, Driver: Phoenix, User Name: anything,

Password: anything.

Construct URL as follows: jdbc:phoenix:[zookeeper quorum server]. For

example, to connect to local HBase use jdbc:phoenix:localhost.

Press Test (it should succeed if everything is set up correctly), then press OK to close.

Double-click your newly created Phoenix alias and click Connect. You are now ready to run SQL queries against Phoenix.

Through SQuirreL, you can issue SQL statements in the SQL tab (create tables, insert data, run queries), and inspect table metadata in the Object tab (for example, list tables, columns, primary keys, and types).

Note that most graphical clients that support generic JDBC drives should also work, and the setup process is usually similar.

Samples

The best place to see samples are in our unit tests under src/test/java. The ones in the endToEnd package are tests demonstrating how to use all aspects of the Phoenix JDBC driver. We also have some examples in the examples directory.

Configuration

Configuration

Phoenix provides many different knobs and dials to configure and tune the system to run more optimally on your cluster. The configuration is done through a series of Phoenix-specific properties specified both on client and server-side hbase-site.xml files. In addition to these properties, there are of course all the HBase configuration properties with the most important ones documented here.

The table below outlines the full set of Phoenix-specific configuration properties and their defaults.

| Property | Description | Default |

|---|---|---|

data.tx.snapshot.dir | Server-side property specifying the HDFS directory used to store snapshots of the transaction state. No default value. | None |

data.tx.timeout | Server-side property specifying the timeout in seconds for a transaction to complete. Default is 30 seconds. | 30 |

phoenix.query.timeoutMs | Client-side property specifying the number of milliseconds after which a query will timeout on the client. Default is 10 min. | 600000 |

phoenix.query.keepAliveMs | Maximum time in milliseconds that excess idle threads will wait for new tasks before terminating when the number of threads is greater than the cores in the client side thread pool executor. Default is 60 sec. | 60000 |

phoenix.query.threadPoolSize | Number of threads in client side thread pool executor. As the number of machines/cores in the cluster grows, this value should be increased. | 128 |

phoenix.query.queueSize | Max queue depth of the bounded round robin backing the client side thread pool executor, beyond which an attempt to queue additional work is rejected. If zero, a SynchronousQueue is used instead of the bounded round robin queue. The default value is 5000. | 5000 |

phoenix.stats.guidepost.width | Server-side parameter that specifies the number of bytes between guideposts. A smaller amount increases parallelization, but also increases the number of chunks which must be merged on the client side. The default value is 100 MB. | 104857600 |

phoenix.stats.guidepost.per.region | Server-side parameter that specifies the number of guideposts per region. If set to a value greater than zero, then the guidepost width is determined by MAX_FILE_SIZE of table / phoenix.stats.guidepost.per.region. Otherwise, if not set, then the phoenix.stats.guidepost.width parameter is used. No default value. | None |

phoenix.stats.updateFrequency | Server-side parameter that determines the frequency in milliseconds for which statistics will be refreshed from the statistics table and subsequently used by the client. The default value is 15 min. | 900000 |

phoenix.stats.minUpdateFrequency | Client-side parameter that determines the minimum amount of time in milliseconds that must pass before statistics may again be manually collected through another UPDATE STATISTICS call. The default value is phoenix.stats.updateFrequency / 2. | 450000 |

phoenix.stats.useCurrentTime | Server-side parameter that if true causes the current time on the server-side to be used as the timestamp of rows in the statistics table when background tasks such as compactions or splits occur. If false, then the max timestamp found while traversing the table over which statistics are being collected is used as the timestamp. Unless your client is controlling the timestamps while reading and writing data, this parameter should be left alone. The default value is true. | true |

phoenix.query.spoolThresholdBytes | Threshold size in bytes after which results from parallelly executed query results are spooled to disk. Default is 20 mb. | 20971520 |

phoenix.query.maxSpoolToDiskBytes | Threshold size in bytes up to which results from parallelly executed query results are spooled to disk above which the query will fail. Default is 1 GB. | 1024000000 |

phoenix.query.maxGlobalMemoryPercentage | Percentage of total heap memory (i.e. Runtime.getRuntime().maxMemory()) that all threads may use. Only coarse-grained memory usage is tracked, mainly accounting for memory usage in the intermediate map built during group by aggregation. When this limit is reached clients block while attempting to get more memory, essentially throttling memory usage. Defaults to 15% | 15 |

phoenix.query.maxGlobalMemorySize | Max size in bytes of total tracked memory usage. By default not specified, however, if present, the lower of this parameter and phoenix.query.maxGlobalMemoryPercentage will be used. | |

phoenix.query.maxGlobalMemoryWaitMs | Maximum amount of time that a client will block while waiting for more memory to become available. After this amount of time, an InsufficientMemoryException is thrown. Default is 10 sec. | 10000 |

phoenix.query.maxTenantMemoryPercentage | Maximum percentage of phoenix.query.maxGlobalMemoryPercentage that any one tenant is allowed to consume. After this percentage, an InsufficientMemoryException is thrown. Default is 100% | 100 |

phoenix.query.dateFormat | Default pattern to use for conversion of a date to/from a string, whether through the TO_CHAR(<date>) or TO_DATE(<date-string>) functions, or through resultSet.getString(<date-column>). Default is yyyy-MM-dd HH:mm:ss.SSS | yyyy-MM-dd HH:mm:ss.SSS |

phoenix.query.dateFormatTimeZone | A timezone id that specifies the default time zone in which date, time, and timestamp literals should be interpreted when interpreting string literals or using the TO_DATE function. A time zone id can be a timezone abbreviation such as "PST", or a full name such as "America/Los_Angeles", or a custom offset such as "GMT-9:00". The time zone id "LOCAL" can also be used to interpret all date, time, and timestamp literals as being in the current timezone of the client. | GMT |

phoenix.query.timeFormat | Default pattern to use for conversion of TIME to/from a string, whether through the TO_CHAR(<time>) or TO_TIME(<time-string>) functions, or through resultSet.getString(<time-column>). Default is yyyy-MM-dd HH:mm:ss.SSS | yyyy-MM-dd HH:mm:ss.SSS |

phoenix.query.timestampFormat | Default pattern to use for conversion of TIMESTAMP to/from a string, whether through the TO_CHAR(<timestamp>) or TO_TIMESTAMP(<timestamp-string>) functions, or through resultSet.getString(<timestamp-column>). Default is yyyy-MM-dd HH:mm:ss.SSS | yyyy-MM-dd HH:mm:ss.SSS |

phoenix.query.numberFormat | Default pattern to use for conversion of a decimal number to/from a string, whether through the TO_CHAR(<decimal-number>) or TO_NUMBER(<decimal-string>) functions, or through resultSet.getString(<decimal-column>). Default is #,##0.### | #,##0.### |

phoenix.mutate.maxSize | The maximum number of rows that may be batched on the client before a commit or rollback must be called. | 500000 |

phoenix.mutate.batchSize | The number of rows that are batched together and automatically committed during the execution of an UPSERT SELECT or DELETE statement. This property may be overridden at connection time by specifying the UpsertBatchSize property value. Note that the connection property value does not affect the batch size used by the coprocessor when these statements are executed completely on the server side. | 1000 |

phoenix.query.maxServerCacheBytes | Maximum size (in bytes) of a single sub-query result (usually the filtered result of a table) before compression and conversion to a hash map. Attempting to hash an intermediate sub-query result of a size bigger than this setting will result in a MaxServerCacheSizeExceededException. Default 100MB. | 104857600 |

phoenix.coprocessor.maxServerCacheTimeToLiveMs | Maximum living time (in milliseconds) of server caches. A cache entry expires after this amount of time has passed since last access. Consider adjusting this parameter when a server-side IOException("Could not find hash cache for joinId") happens. Getting warnings like "Earlier hash cache(s) might have expired on servers" might also be a sign that this number should be increased. | 30000 |

phoenix.query.useIndexes | Client-side property determining whether or not indexes are considered by the optimizer to satisfy a query. Default is true | true |

phoenix.index.failure.handling.rebuild | Server-side property determining whether or not a mutable index is rebuilt in the background in the event of a commit failure. Only applicable for indexes on mutable, non transactional tables. Default is true | true |

phoenix.index.failure.block.write | Server-side property determining whether or not writes to the data table are disallowed in the event of a commit failure until the index can be caught up with the data table. Requires that phoenix.index.failure.handling.rebuild is true as well. Only applicable for indexes on mutable, non transactional tables. Default is false | false |

phoenix.index.failure.handling.rebuild.interval | Server-side property controlling the millisecond frequency at which the server checks whether or not a mutable index needs to be partially rebuilt to catch up with updates to the data table. Only applicable for indexes on mutable, non transactional tables. Default is 10 seconds. | 10000 |

phoenix.index.failure.handling.rebuild.overlap.time | Server-side property controlling how many milliseconds to go back from the timestamp at which the failure occurred to go back when a partial rebuild is performed. Only applicable for indexes on mutable, non transactional tables. Default is 1 millisecond. | 1 |

phoenix.index.mutableBatchSizeThreshold | Number of mutations in a batch beyond which index metadata will be sent as a separate RPC to each region server as opposed to included inline with each mutation. Defaults to 5. | 5 |

phoenix.schema.dropMetaData | Determines whether or not an HBase table is dropped when the Phoenix table is dropped. Default is true | true |

phoenix.groupby.spillable | Determines whether or not a GROUP BY over a large number of distinct values is allowed to spill to disk on the region server. If false, an InsufficientMemoryException will be thrown instead. Default is true | true |

phoenix.groupby.spillFiles | Number of memory mapped spill files to be used when spilling GROUP BY distinct values to disk. Default is 2 | 2 |

phoenix.groupby.maxCacheSize | Size in bytes of pages cached during GROUP BY spilling. Default is 100Mb | 102400000 |

phoenix.groupby.estimatedDistinctValues | Number of estimated distinct values when a GROUP BY is performed. Used to perform initial sizing with growth of 1.5x each time reallocation is required. Default is 1000 | 1000 |

phoenix.distinct.value.compress.threshold | Size in bytes beyond which aggregate operations which require tracking distinct value counts (such as COUNT DISTINCT) will use Snappy compression. Default is 1Mb | 1024000 |

phoenix.index.maxDataFileSizePerc | Percentage used to determine the MAX_FILESIZE for the shared index table for views relative to the data table MAX_FILESIZE. The percentage should be estimated based on the anticipated average size of a view index row versus the data row. Default is 50%. | 50 |

phoenix.coprocessor.maxMetaDataCacheTimeToLiveMs | Time in milliseconds after which the server-side metadata cache for a tenant will expire if not accessed. Default is 30mins | 180000 |

phoenix.coprocessor.maxMetaDataCacheSize | Max size in bytes of total server-side metadata cache after which evictions will begin to occur based on least recent access time. Default is 20Mb | 20480000 |

phoenix.client.maxMetaDataCacheSize | Max size in bytes of total client-side metadata cache after which evictions will begin to occur based on least recent access time. Default is 10Mb | 10240000 |

phoenix.sequence.cacheSize | Number of sequence values to reserve from the server and cache on the client when the next sequence value is allocated. Only used if not defined by the sequence itself. Default is 100 | 100 |

phoenix.clock.skew.interval | Delay interval(in milliseconds) when opening SYSTEM.CATALOG to compensate possible time clock skew when SYSTEM.CATALOG moves among region servers. | 2000 |

phoenix.index.failure.handling.rebuild | Boolean flag which turns on/off auto-rebuild a failed index from when some updates are failed to be updated into the index. | true |

phoenix.index.failure.handling.rebuild.interval | Time interval(in milliseconds) for index rebuild backend Job to check if there is an index to be rebuilt | 10000 |

phoenix.index.failure.handling.rebuild.overlap.time | Index rebuild job builds an index from when it failed - the time interval(in milliseconds) in order to create a time overlap to prevent missing updates when there exists time clock skew. | 300000 |

phoenix.query.force.rowkeyorder | Whether or not a non aggregate query returns rows in row key order for salted tables. For version prior to 4.4, use phoenix.query.rowKeyOrderSaltedTable instead. Default is true. | true |

phoenix.connection.autoCommit | Whether or not a new connection has auto-commit enabled when it is created. | false |

phoenix.table.default.store.nulls | The default value of the STORE_NULLS flag used for table creation which determines whether or not null values should be explicitly stored in HBase. This is a client side parameter. Available starting from Phoenix 4.3. | false |

phoenix.table.istransactional.default | The default value of the TRANSACTIONAL flag used for table creation which determines whether or not a table is transactional . This is a client side parameter. Available starting from Phoenix 4.7. | false |

phoenix.transactions.enabled | Determines whether or not transactions are enabled in Phoenix. A table may not be declared as transactional if transactions are disabled. This is a client side parameter. Available starting from Phoenix 4.7. | false |

phoenix.mapreduce.split.by.stats | Determines whether to use the splits determined by statistics for MapReduce input splits. Default is true. This is a server side parameter. Available starting from Phoenix 4.10. Set to false to enable behavior from previous versions. | true |

phoenix.log.level | Client-side property enabling query (only SELECT statement) logging. The logs are written to the SYSTEM.LOG table (requires a user to have W access on SYSTEM.LOG table). Possible values: OFF = No logging; INFO = Enables query logging; DEBUG = More details on Query (Explain plan, HBase Scan Details etc); TRACE = Logs query bind parameters as well. Available starting from Phoenix 4.14. WARNING: Enabling this feature may leak sensitive information to anyone who can access the SYSTEM.LOG table. | OFF |

phoenix.log.sample.rate | Client-side property controlling the probability of logging a query to the query log. Set to a value between 0.0(no query) and 1.0(100% queries) . Available starting from Phoenix 4.14. | 1.0 |

Backward Compatibility

Phoenix maintains backward compatibility across at least two minor releases to allow for no downtime through server-side rolling restarts upon upgrading. See below for details.

Versioning Convention

Phoenix uses a standard three number versioning schema of the form:

<major version> . <minor version> . <patch version>For example, 4.2.1 has a major version of 4,

a minor version of 2, and a patch version of 1.

Patch Release

Upgrading to a new patch release (i.e. only the patch version has changed) is the simplest case. The jar upgrade may occur in any order: client first or server first, and a mix of clients with different patch release versions is fine.

Minor Release

When upgrading to a new minor release (i.e. the major version is the same, but the minor version has changed), sometimes modifications to the system tables are necessary to either fix a bug or provide a new feature. This upgrade will occur automatically the first time a newly upgraded client connects to the newly upgraded server. It is required that the server-side jar be upgraded first across your entire cluster, before any clients are upgraded. An older client (two minor versions back) will work with a newer server jar when the minor version is different, but not vice versa. In other words, clients do not need to be upgraded in lock step with the server. However, as the server version moves forward, the client version should move forward as well. This allows Phoenix to evolve its client/server protocol while still providing clients sufficient time to upgrade their clients.

As of the 4.3 release, a mix of clients on different minor release versions is supported as well (note that prior releases required all clients to be upgraded at the same time). Another improvement as of the 4.3 release is that an upgrade may be done directly from one minor version to another higher minor version (prior releases required an upgrade to each minor version in between).

Major Release

Upgrading to a new major release may require downtime as well as potentially the running of a migration script. Additionally, all clients and servers may need to be upgraded at the same time. This will be determined on a release-by-release basis.

Release Notes

Specific details on issues and their fixes that may impact you can be found here.

Performance

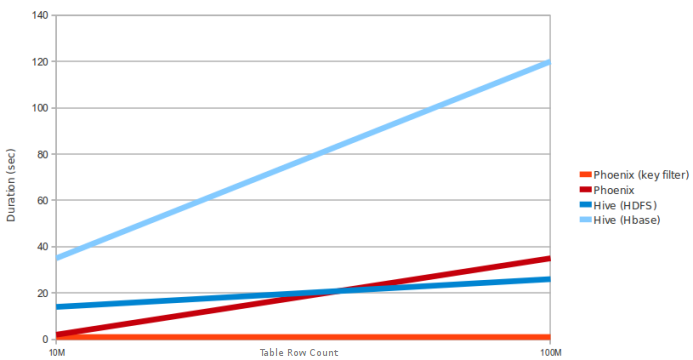

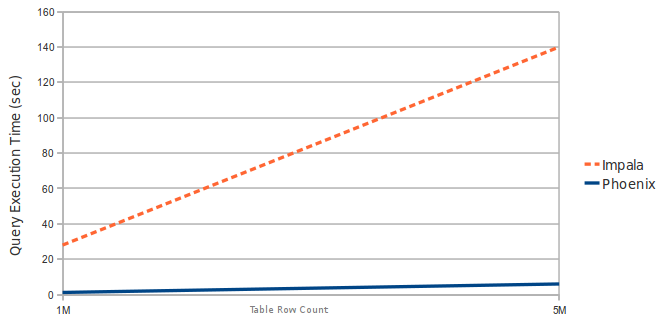

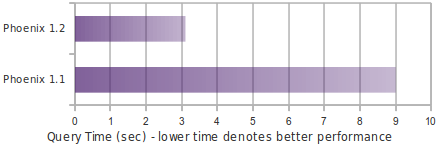

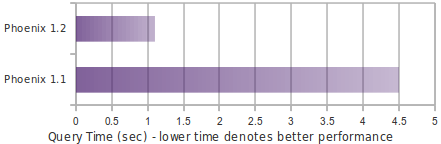

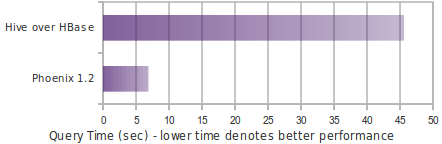

This page has not been updated recently and may not reflect the current state of the project.